Hello!

Welcome back.

AI in medicine is moving fast, but the way we regulate it hasn’t kept up.

A recent Nature paper, “Innovating global regulatory frameworks for generative AI in medical devices is an urgent priority,” highlights a key issue: current frameworks were designed for traditional software, not for models like LLMs that don’t always produce the same output.

As a result, evaluating safety and performance with current approaches becomes more complex.
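For readers who want to see why identical input can lead to different output, here is a minimal Python sketch. It assumes a toy model that picks the next word by sampling from a probability distribution; the candidate words and their scores are made up for illustration and do not come from the paper or from any real model.

```python
import math
import random

def sample_token(logits, temperature, rng):
    """Sample one index from softmax(logits / temperature)."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(x - peak) for x in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

def greedy_token(logits):
    """Always pick the highest-scoring index (no randomness)."""
    return max(range(len(logits)), key=lambda i: logits[i])

# Hypothetical scores for three candidate next words (illustrative only).
candidates = ["pneumonia", "bronchitis", "asthma"]
logits = [2.0, 1.6, 0.5]

rng = random.Random()  # unseeded, so each run can differ
sampled = [candidates[sample_token(logits, 1.0, rng)] for _ in range(10)]
greedy = [candidates[greedy_token(logits)] for _ in range(10)]

print("sampled:", sampled)  # may vary from run to run
print("greedy: ", greedy)   # the same word every time
```

Sampling is what lets the same prompt produce different answers, while greedy selection is repeatable in this toy setup. Traditional software behaves like the greedy case, which is the gap the paper points to.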

Let’s get into this week’s issue.

🤖 AIBytes

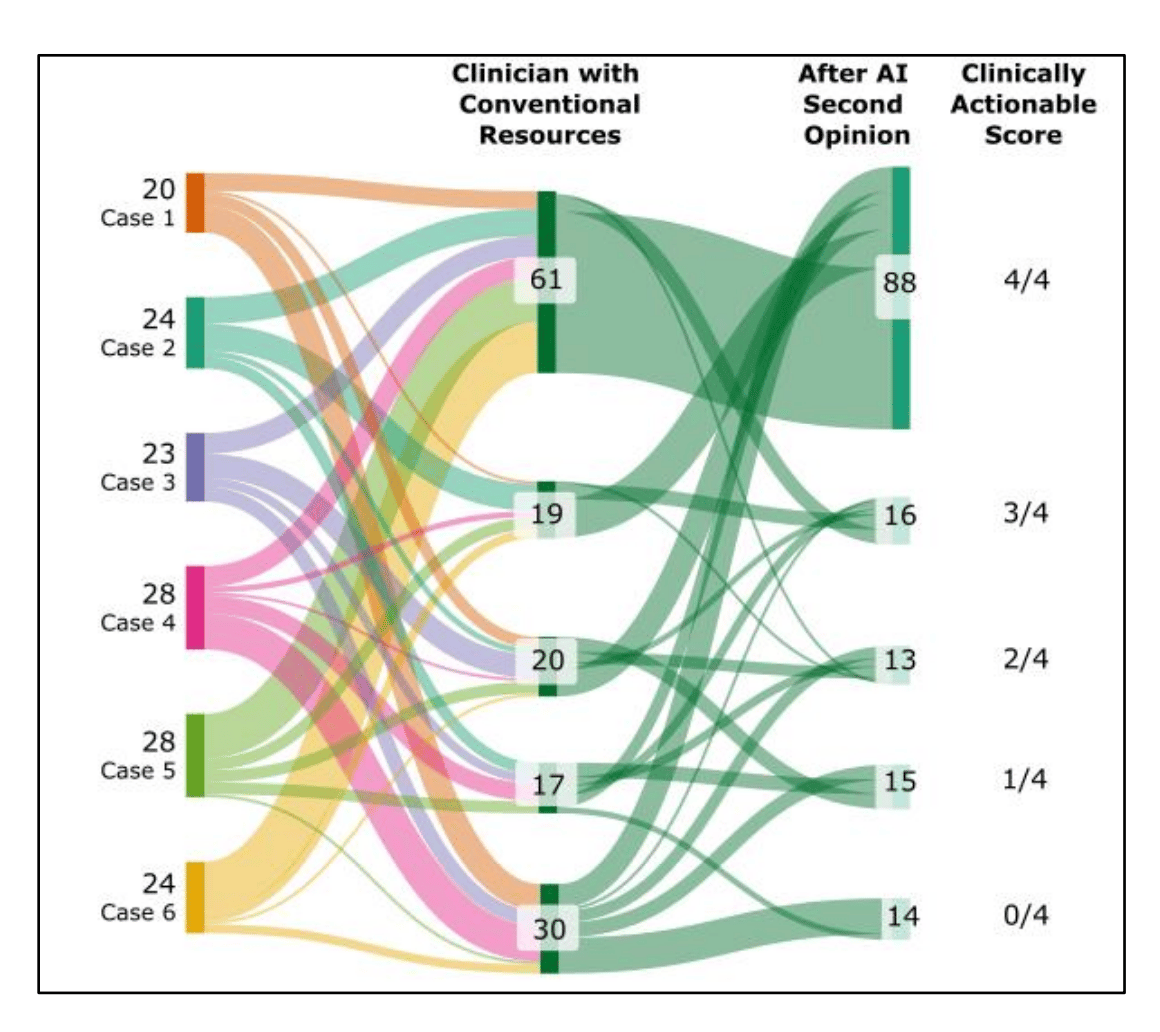

Researchers studied how LLMs behave when they see clinician reasoning, testing whether they truly reason or simply repeat what clinicians say.

🔬 Methods

Design: Comparative evaluation (LLM alone vs LLM with clinician reasoning)

Dataset: 61 NEJM clinical cases (2024–2026)

Intervention: Models first analyzed cases independently, then re-analyzed after exposure to expert clinician reasoning (including correct diagnosis and next steps).

Outcomes:

Diagnostic overlap

Next-step recommendation overlap

Evidence of independent reasoning vs content echoing.

📊 Results

After the models were shown the clinicians’ reasoning, overlap with the clinicians increased:

Diagnosis overlap (≥3 matches): 66% → 94%

Next steps overlap (≥3 matches): 20% → 54%

The AI started repeating more of the clinician’s ideas (≥30% increase), suggesting it may be echoing rather than independently reasoning.
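To make the “≥3 matches” figures concrete, here is a rough Python sketch of how an overlap rate like this could be computed. The summary above does not describe how the study matched items, so exact case-insensitive string matching is an assumption, and the function names and toy cases are hypothetical.

```python
def overlap_count(model_items, clinician_items):
    """Count items present in both lists (case-insensitive exact match)."""
    model_set = {item.strip().lower() for item in model_items}
    clinician_set = {item.strip().lower() for item in clinician_items}
    return len(model_set & clinician_set)

def share_meeting_threshold(cases, threshold=3):
    """Fraction of cases whose overlap count is at least `threshold`."""
    hits = sum(
        1
        for model_items, clinician_items in cases
        if overlap_count(model_items, clinician_items) >= threshold
    )
    return hits / len(cases)

# Two made-up cases: (model differential, clinician differential).
toy_cases = [
    (
        ["Pneumonia", "Pulmonary embolism", "Heart failure", "Asthma"],
        ["pneumonia", "pulmonary embolism", "heart failure", "sarcoidosis"],
    ),
    (
        ["Migraine", "Tension headache", "Sinusitis"],
        ["migraine", "subarachnoid hemorrhage", "meningitis"],
    ),
]

print(share_meeting_threshold(toy_cases))  # 0.5 (one of two cases has >= 3 matches)
```

A metric like this only counts agreement. It cannot tell whether a match came from the model’s own reasoning or from text it was shown, which is the limitation noted next.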

Key limitation:

Improvement may reflect true reasoning OR copying, and the study cannot fully separate these.

Failure modes identified:

Silent content echoing: model repeats clinician input but presents it as its own reasoning.

Propagation of harmful steps: incorrect clinical actions may be carried forward without attribution.

🔑 Key Takeaways

LLMs may copy clinician input instead of thinking independently.

Echoing is harder to detect than simple agreement and can introduce hidden risk.

Evaluation must move beyond accuracy to assess real clinician–AI interaction.

🔗 Everett SS, et al. From Tool to Teammate: A Randomized Controlled Trial of Clinician-AI Collaborative Workflows for Diagnosis. Preprint. 2025. doi:10.1101/2025.06.07.25329176

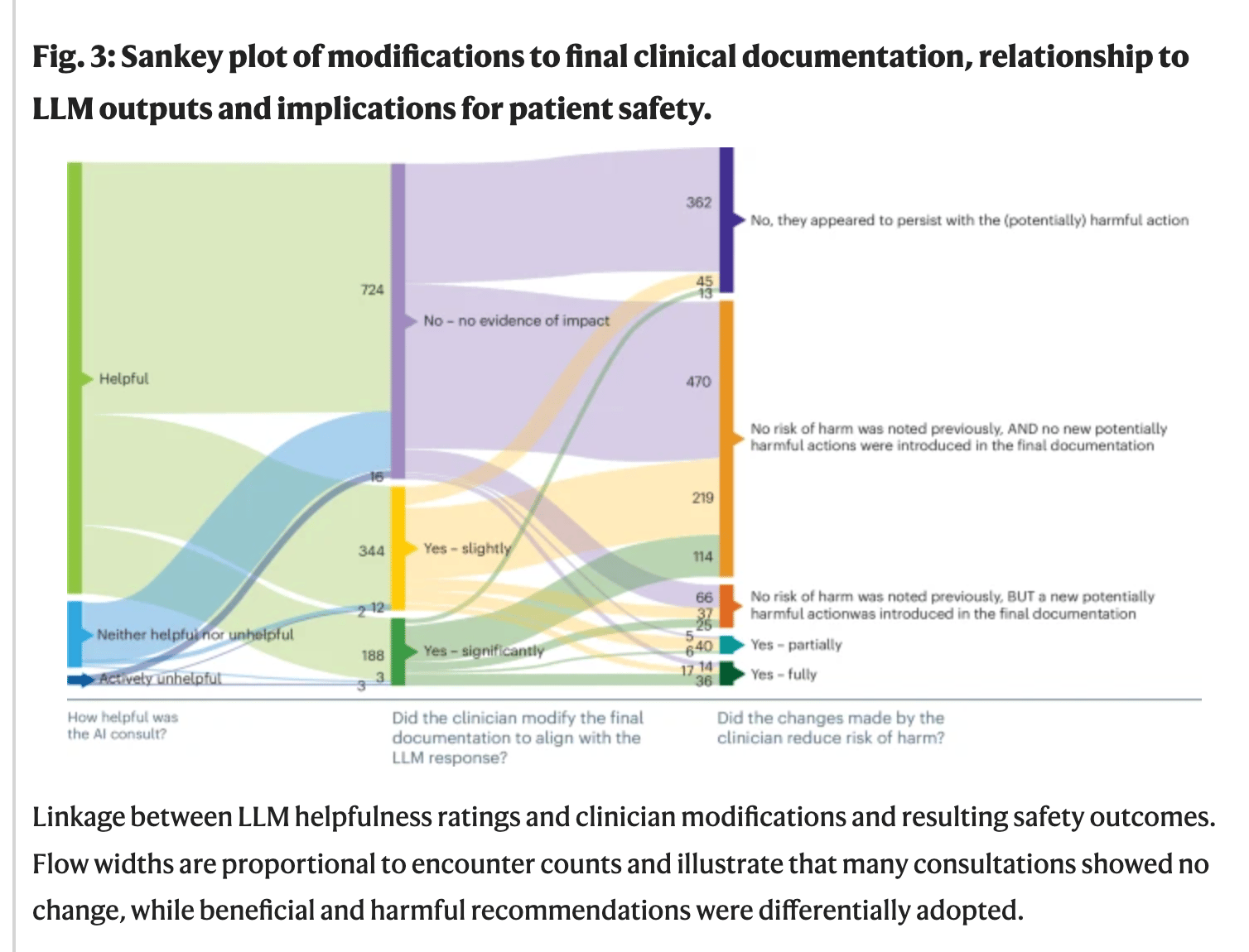

Researchers evaluated a GPT-4o–based clinical decision support tool embedded in primary care clinics to assess its safety and usefulness in real-world practice.

🔬 Methods

Retrospective evaluation of an AI tool embedded in the EHR (electronic health record) across 16 primary care clinics in Kenya.

AI generated diagnostic and management suggestions during patient visits.

1,469 patient encounters were reviewed by a physician panel.

Outcomes included diagnostic and clinical reasoning, safety, alignment with guidelines, and clinician response to AI output.

📊 Results

The tool was used in 36,670 of 78,366 consultations (46.8%).

99% of recommendations aligned with local clinical guidelines.

3.4% of outputs contained hallucinations, mostly minor errors such as misexpanded acronyms or drug names.

7.8% included potentially harmful recommendations.

Harmful recommendations were more likely to be followed, proportionally, than beneficial ones.

Total cost was $7.81, or about $0.005 per encounter.
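A quick arithmetic check of the usage and cost figures, as a small Python sketch. The per-encounter denominator is an assumption: dividing by the 1,469 reviewed encounters reproduces the reported figure of about $0.005, but the summary does not state which count was used.

```python
# Figures as reported in the results above.
consultations_total = 78_366
consultations_with_tool = 36_670
total_cost_usd = 7.81

# Assumption: cost is spread over the 1,469 physician-reviewed encounters.
reviewed_encounters = 1_469

usage_rate = consultations_with_tool / consultations_total
cost_per_encounter = total_cost_usd / reviewed_encounters

print(f"Usage rate: {usage_rate:.1%}")                   # 46.8%
print(f"Cost per encounter: ${cost_per_encounter:.4f}")  # $0.0053
```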

🔑 Key Takeaways

This study reflects real-world clinical use, not a simulation.

The AI often produced useful, guideline-aligned suggestions.

Still, clinically meaningful errors occurred, including harmful recommendations.

Clinicians remain essential because they must judge when AI advice is helpful and when it is unsafe.

AI shows promise, but it is not ready for independent clinical decision-making.

🔗 Agweyu A, Mwaniki P, Musau W, et al. Safety of a large language model-based clinical decision support system in African primary healthcare. Nature Health. 2026. doi:10.1038/s44360-026-00082-5

🦾 TechTools

AI virtual care assistants to support patients after surgery.

Provides recovery guidance and monitors symptoms at home, alerting care teams if something is off.

Designed as a regulated medical device, aiming to safely extend care beyond hospital discharge.

An AI writing assistant designed for research and academic work.

Helps you write, organize ideas, and add citations as you go.

Can read papers or PDFs and suggest content based on real sources.

An AI tool that helps you get things done, not just answer questions.

It can connect to apps (like email or calendar) and help complete tasks automatically.

Think of it as a digital assistant that can plan and take action.

Not for clinical use: Do not use with EHRs or patient data due to security and privacy risks.

🧬 AIMedily Snaps

NEJM: From promise to practice: The next era of AI in health care (Link).

Microsoft: Copilot for health (Link).

AI can predict preemies’ path, Stanford Medicine-led study shows (Link).

Nature: Innovating global regulatory frameworks for generative AI in medical devices is an urgent priority (Link).

Harvard: AI is speeding into healthcare. Who should regulate it? (Link).

FDA AI-enabled medical devices: Radiology still dominates (Link).

🧪 Research Signals

AI based rehabilitation: the way forward in addressing unmet needs in musculoskeletal disease (Link).

Keeping health equity at the forefront of the AI revolution in medicine and health (Link).

Digital twins in Neuro-oncology: A Systematic Review of Current Implementations, Technical Strategies, and Clinical Applications (Link).

Physical AI goes to the operating room: are we ready for the Surgical Data Factory? (Link).

External Validation of a Commercially Available AI Tool for Nasogastric Tube Position Decision Support in the NHS: A Prospective Silent Trial (Link).

🧩 TriviaRX

Two clinicians enter the exact same prompt into the same medical AI model—same patient, same data, same question. What is most likely to happen?

A. The AI will give exactly the same answer

B. The AI will provide the same answer every time if the input is identical

C. The AI may give slightly different answers each time

D. The AI will always follow the same clinical reasoning path

Now, let’s check last week’s answer to see if you got it right.

✅ A) Changes in skin odor noticed before diagnosis

A woman noticed a change in body odor years before Parkinson’s diagnosis, which led researchers to identify chemical changes in skin as potential early biomarkers.

That’s it for today.

As always, thank you for being here.

Staying ahead in medical AI is easier when your circle is too. Share AIMedily 😊.

Until next week.

Itzel Fer, MD PM&R

P.S. Enjoying AIMedily? 👉 Write a review here (it takes less than a minute).